aclnnBatchNormBackward

Interface Prototype

Each operator has a two-stage interface. First call the "aclnnXxxGetWorkspaceSize" interface, which takes the input arguments and computes the workspace size required by the computation flow. Then call the "aclnnXxx" interface to perform the computation. The two stages are:

- First-stage interface: aclnnStatus aclnnBatchNormBackwardGetWorkspaceSize(const aclTensor *gradOut, const aclTensor *input, const aclTensor *weight, const aclTensor *runningMean, const aclTensor *runningVar, const aclTensor *saveMean, const aclTensor *saveInvstd, bool training, double eps, const aclBoolArray *outputMask, aclTensor *gradInput, aclTensor *gradWeight, aclTensor *gradBias, uint64_t *workspaceSize, aclOpExecutor **executor)

- Second-stage interface: aclnnStatus aclnnBatchNormBackward(void *workspace, uint64_t workspaceSize, aclOpExecutor *executor, const aclrtStream stream)

功能描述

- 算子功能:aclnnBatchNorm的反向计算。

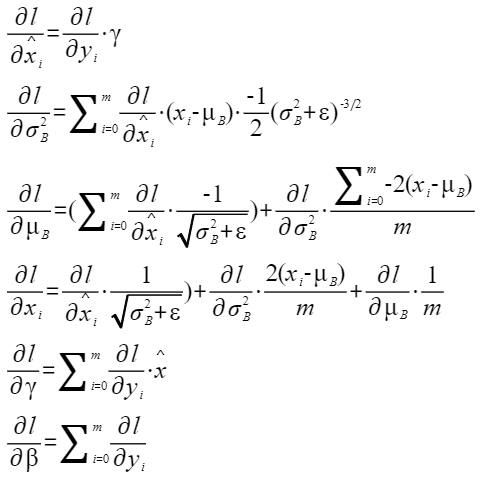

- 计算公式:
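The formula is only available as an image on this page. Assuming the operator follows the standard BatchNorm backward definition (the one used by PyTorch's `native_batch_norm_backward`), the gradients can be written per channel $c$ as below. Here $g$ is `gradOut`, $\gamma$ is `weight` (taken as 1 when omitted, which is an assumption), $M$ is the number of elements in channel $c$ (N times the product of the spatial dimensions), and the statistics are $(\mu_c, r_c) = (\texttt{saveMean}_c, \texttt{saveInvstd}_c)$ when `training` is true, or $(\texttt{runningMean}_c, 1/\sqrt{\texttt{runningVar}_c + \epsilon})$ when it is false. This is a reconstruction of the standard math, not a transcription of the image.

$$
\begin{aligned}
\hat{x}_i &= (x_i - \mu_c)\, r_c \\
\texttt{gradBias}_c &= \sum_{i \in c} g_i \\
\texttt{gradWeight}_c &= \sum_{i \in c} g_i\, \hat{x}_i \\
\texttt{gradInput}_i &=
\begin{cases}
\dfrac{\gamma_c\, r_c}{M} \Big( M g_i - \sum_{j \in c} g_j - \hat{x}_i \sum_{j \in c} g_j\, \hat{x}_j \Big) & \text{training} \\[4pt]
\gamma_c\, r_c\, g_i & \text{inference}
\end{cases}
\end{aligned}
$$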

aclnnBatchNormBackwardGetWorkspaceSize

- Interface definition:

  aclnnStatus aclnnBatchNormBackwardGetWorkspaceSize(const aclTensor *gradOut, const aclTensor *input, const aclTensor *weight, const aclTensor *runningMean, const aclTensor *runningVar, const aclTensor *saveMean, const aclTensor *saveInvstd, bool training, double eps, const aclBoolArray *outputMask, aclTensor *gradInput, aclTensor *gradWeight, aclTensor *gradBias, uint64_t *workspaceSize, aclOpExecutor **executor)

- Parameter description:

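  - gradOut: an aclTensor on the Device side. Supported data types: FLOAT and FLOAT16. Non-contiguous tensors are supported. Supported shapes and formats: 2-D (NC), 3-D (NCL), 4-D (NCHW), and 5-D (NCDHW).

  - input: an aclTensor on the Device side. Supported data types: FLOAT and FLOAT16. Non-contiguous tensors are supported. Supported shapes and formats: 2-D (NC), 3-D (NCL), 4-D (NCHW), and 5-D (NCDHW).

  - weight: optional. An aclTensor on the Device side. Supported data type: FLOAT only. Non-contiguous tensors are supported. Format: ND. A 1-D tensor whose length equals the size of the C axis of input.

  - runningMean: optional. An aclTensor on the Device side. Supported data type: FLOAT only. Non-contiguous tensors are supported. Format: ND. A 1-D tensor whose length equals the size of the C axis of input.

  - runningVar: optional. An aclTensor on the Device side. Supported data type: FLOAT only. Non-contiguous tensors are supported. Format: ND. A 1-D tensor whose length equals the size of the C axis of input.

  - saveMean: optional. An aclTensor on the Device side. Supported data type: FLOAT only. Non-contiguous tensors are supported. Format: ND. A 1-D tensor whose length equals the size of the C axis of input.

  - saveInvstd: optional. An aclTensor on the Device side. Supported data type: FLOAT only. Non-contiguous tensors are supported. Format: ND. A 1-D tensor whose length equals the size of the C axis of input.

  - training: a bool on the Host side that marks the scenario: true means training, false means inference.

  - eps: a double on the Host side, used to keep the denominator from being 0.

  - outputMask: an aclBoolArray output mask made of three bool flags that indicate, in order, whether to compute the gradInput, gradWeight, and gradBias outputs.

  - gradInput: an aclTensor on the Device side with the same data type as input. Non-contiguous tensors are supported. Supported shapes and formats: 2-D (NC), 3-D (NCL), 4-D (NCHW), and 5-D (NCDHW).

  - gradWeight: an aclTensor on the Device side. Supported data type: FLOAT only. Non-contiguous tensors are supported. Format: ND. A 1-D tensor whose length equals the size of the C axis of input.

  - gradBias: an aclTensor on the Device side. Supported data type: FLOAT only. Non-contiguous tensors are supported. Format: ND. A 1-D tensor whose length equals the size of the C axis of input.

  - workspaceSize: returns the size of the workspace that the user must allocate on the Device side.

  - executor: returns the op executor, which contains the operator's computation flow.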

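- Return value:

  Returns an aclnnStatus status code. For details, see aclnn return codes.

  The first-stage interface validates its input parameters and returns an error in the following cases:

  - Returns 161001 (ACLNN_ERR_PARAM_NULLPTR): a pointer-type input argument is a null pointer.

  - Returns 161002 (ACLNN_ERR_PARAM_INVALID):

    - The data type or data format of input, gradOut, or gradInput is not supported.

    - The shape of input, gradOut, or gradInput is not supported.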
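These checks can be mirrored on the host to catch argument mistakes before any device work is queued. The sketch below is hypothetical: `TensorDesc`, `DType`, `IsSupportedFeatureMap`, `IsValidPerChannel`, and `CheckBatchNormBackwardArgs` are illustrative names, not CANN APIs. It returns 161001 when input, gradOut, or gradInput is null and 161002 for the documented dtype and rank violations. It also assumes gradOut and gradInput share the shape of input, and checks the optional per-channel tensors against the parameter list (FLOAT, 1-D, length C); reporting those cases as 161002 goes beyond the error list above and is an assumption. The real operator may check more, or report differently.

```cpp
#include <cstdint>
#include <vector>

// Status codes from the error list above.
constexpr int kSuccess = 0;
constexpr int kErrParamNullptr = 161001;  // ACLNN_ERR_PARAM_NULLPTR
constexpr int kErrParamInvalid = 161002;  // ACLNN_ERR_PARAM_INVALID

// Hypothetical host-side tensor description (not a CANN type).
enum class DType { Float, Float16, Other };
struct TensorDesc {
    DType dtype;
    std::vector<int64_t> shape;
};

// gradOut / input / gradInput: FLOAT or FLOAT16, 2-D (NC) up to 5-D (NCDHW).
bool IsSupportedFeatureMap(const TensorDesc& t) {
    const bool dtypeOk = t.dtype == DType::Float || t.dtype == DType::Float16;
    const bool rankOk = t.shape.size() >= 2 && t.shape.size() <= 5;
    return dtypeOk && rankOk;
}

// Optional per-channel tensors: FLOAT, 1-D, length equal to the C axis (dim 1) of input.
bool IsValidPerChannel(const TensorDesc* t, int64_t channels) {
    if (t == nullptr) return true;  // optional parameter not supplied
    return t->dtype == DType::Float && t->shape.size() == 1 && t->shape[0] == channels;
}

// Hypothetical pre-check mirroring the documented rules.
int CheckBatchNormBackwardArgs(const TensorDesc* gradOut, const TensorDesc* input,
                               const TensorDesc* gradInput,
                               const std::vector<const TensorDesc*>& perChannel) {
    if (gradOut == nullptr || input == nullptr || gradInput == nullptr) {
        return kErrParamNullptr;
    }
    // gradInput must also have the same data type as input.
    if (!IsSupportedFeatureMap(*gradOut) || !IsSupportedFeatureMap(*input) ||
        !IsSupportedFeatureMap(*gradInput) || gradInput->dtype != input->dtype) {
        return kErrParamInvalid;
    }
    // Assumption: gradOut and gradInput have exactly the same shape as input.
    if (gradOut->shape != input->shape || gradInput->shape != input->shape) {
        return kErrParamInvalid;
    }
    const int64_t channels = input->shape[1];
    for (const TensorDesc* t : perChannel) {
        if (!IsValidPerChannel(t, channels)) return kErrParamInvalid;
    }
    return kSuccess;
}
```

Passing weight, runningMean, and the other optional tensors through `perChannel` keeps the signature short; a null entry stands for an omitted optional parameter.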

aclnnBatchNormBackward

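- Interface definition:

  aclnnStatus aclnnBatchNormBackward(void *workspace, uint64_t workspaceSize, aclOpExecutor *executor, const aclrtStream stream)

- Parameter description:

  - workspace: start address of the workspace memory allocated on the Device side.

  - workspaceSize: size of the workspace allocated on the Device side, obtained from the first-stage interface aclnnBatchNormBackwardGetWorkspaceSize.

  - executor: the op executor, which contains the operator's computation flow.

  - stream: the AscendCL stream on which the task is executed.

- Return value:

  Returns an aclnnStatus status code. For details, see aclnn return codes.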

Example

```cpp
#include <array>
#include <cstdio>
#include <iostream>
#include <vector>
#include "acl/acl.h"
#include "aclnnop/aclnn_batch_norm_backward.h"

#define CHECK_RET(cond, return_expr) \
  do {                               \
    if (!(cond)) {                   \
      return_expr;                   \
    }                                \
  } while (0)

#define LOG_PRINT(message, ...)     \
  do {                              \
    printf(message, ##__VA_ARGS__); \
  } while (0)

int64_t GetShapeSize(const std::vector<int64_t>& shape) {
  int64_t shapeSize = 1;
  for (auto i : shape) {
    shapeSize *= i;
  }
  return shapeSize;
}

int Init(int32_t deviceId, aclrtContext* context, aclrtStream* stream) {
  // Boilerplate: AscendCL initialization
  auto ret = aclInit(nullptr);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("aclInit failed. ERROR: %d\n", ret); return ret);
  ret = aclrtSetDevice(deviceId);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("aclrtSetDevice failed. ERROR: %d\n", ret); return ret);
  ret = aclrtCreateContext(context, deviceId);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("aclrtCreateContext failed. ERROR: %d\n", ret); return ret);
  ret = aclrtSetCurrentContext(*context);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("aclrtSetCurrentContext failed. ERROR: %d\n", ret); return ret);
  ret = aclrtCreateStream(stream);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("aclrtCreateStream failed. ERROR: %d\n", ret); return ret);
  return 0;
}

template <typename T>
int CreateAclTensor(const std::vector<T>& hostData, const std::vector<int64_t>& shape, void** deviceAddr,
                    aclDataType dataType, aclTensor** tensor) {
  auto size = GetShapeSize(shape) * sizeof(T);
  // Allocate device memory with aclrtMalloc
  auto ret = aclrtMalloc(deviceAddr, size, ACL_MEM_MALLOC_HUGE_FIRST);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("aclrtMalloc failed. ERROR: %d\n", ret); return ret);
  // Copy the host data to device memory with aclrtMemcpy
  ret = aclrtMemcpy(*deviceAddr, size, hostData.data(), size, ACL_MEMCPY_HOST_TO_DEVICE);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("aclrtMemcpy failed. ERROR: %d\n", ret); return ret);
  // Compute the strides of a contiguous tensor
  std::vector<int64_t> strides(shape.size(), 1);
  for (int64_t i = static_cast<int64_t>(shape.size()) - 2; i >= 0; i--) {
    strides[i] = shape[i + 1] * strides[i + 1];
  }
  // Create the aclTensor with aclCreateTensor
  *tensor = aclCreateTensor(shape.data(), shape.size(), dataType, strides.data(), 0, aclFormat::ACL_FORMAT_ND,
                            shape.data(), shape.size(), *deviceAddr);
  return 0;
}

int main() {
  // 1. (Boilerplate) Initialize device/context/stream; see the AscendCL API reference
  // Set deviceId according to your actual device
  int32_t deviceId = 0;
  aclrtContext context;
  aclrtStream stream;
  auto ret = Init(deviceId, &context, &stream);
  // Handle the check as needed
  CHECK_RET(ret == 0, LOG_PRINT("Init acl failed. ERROR: %d\n", ret); return ret);

  // 2. Construct inputs and outputs; customize according to the API definition
  std::vector<int64_t> inputShape = {2, 3, 2};
  std::vector<int64_t> meanShape = {3};
  void* gradOutDeviceAddr = nullptr;
  void* inputDeviceAddr = nullptr;
  void* weightDeviceAddr = nullptr;
  void* runningMeanDeviceAddr = nullptr;
  void* runningVarDeviceAddr = nullptr;
  void* saveMeanDeviceAddr = nullptr;
  void* saveInvstdDeviceAddr = nullptr;
  void* gradInputDeviceAddr = nullptr;
  void* gradWeightDeviceAddr = nullptr;
  void* gradBiasDeviceAddr = nullptr;
  aclTensor* gradOut = nullptr;
  aclTensor* input = nullptr;
  aclTensor* weight = nullptr;
  aclTensor* runningMean = nullptr;
  aclTensor* runningVar = nullptr;
  aclTensor* saveMean = nullptr;
  aclTensor* saveInvstd = nullptr;
  aclTensor* gradInput = nullptr;
  aclTensor* gradWeight = nullptr;
  aclTensor* gradBias = nullptr;
  std::vector<float> inputHostData = {0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11};
  std::vector<float> zeroHostData = {0, 0, 0};
  std::vector<float> oneHostData = {1, 1, 1};
  // Create the gradOut aclTensor
  ret = CreateAclTensor(inputHostData, inputShape, &gradOutDeviceAddr, aclDataType::ACL_FLOAT, &gradOut);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // Create the input aclTensor
  ret = CreateAclTensor(inputHostData, inputShape, &inputDeviceAddr, aclDataType::ACL_FLOAT, &input);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // Create the weight aclTensor
  ret = CreateAclTensor(oneHostData, meanShape, &weightDeviceAddr, aclDataType::ACL_FLOAT, &weight);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // Create the runningMean aclTensor
  ret = CreateAclTensor(zeroHostData, meanShape, &runningMeanDeviceAddr, aclDataType::ACL_FLOAT, &runningMean);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // Create the runningVar aclTensor
  ret = CreateAclTensor(oneHostData, meanShape, &runningVarDeviceAddr, aclDataType::ACL_FLOAT, &runningVar);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // Create the saveMean aclTensor
  ret = CreateAclTensor(zeroHostData, meanShape, &saveMeanDeviceAddr, aclDataType::ACL_FLOAT, &saveMean);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // Create the saveInvstd aclTensor
  ret = CreateAclTensor(zeroHostData, meanShape, &saveInvstdDeviceAddr, aclDataType::ACL_FLOAT, &saveInvstd);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // Create the gradInput aclTensor
  ret = CreateAclTensor(inputHostData, inputShape, &gradInputDeviceAddr, aclDataType::ACL_FLOAT, &gradInput);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // Create the gradWeight aclTensor
  ret = CreateAclTensor(zeroHostData, meanShape, &gradWeightDeviceAddr, aclDataType::ACL_FLOAT, &gradWeight);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // Create the gradBias aclTensor
  ret = CreateAclTensor(zeroHostData, meanShape, &gradBiasDeviceAddr, aclDataType::ACL_FLOAT, &gradBias);
  CHECK_RET(ret == ACL_SUCCESS, return ret);
  // outputMask: compute gradInput, gradWeight and gradBias
  std::array<bool, 3> maskData = {true, true, true};
  aclBoolArray* outputMask = aclCreateBoolArray(maskData.data(), maskData.size());

  // 3. Call the CANN operator library API (replace with the specific operator interface)
  uint64_t workspaceSize = 0;
  aclOpExecutor* executor = nullptr;
  // Call the first-stage interface of aclnnBatchNormBackward
  ret = aclnnBatchNormBackwardGetWorkspaceSize(gradOut, input, weight, runningMean, runningVar, saveMean, saveInvstd,
                                               true, 1e-5, outputMask, gradInput, gradWeight, gradBias,
                                               &workspaceSize, &executor);
  CHECK_RET(ret == ACL_SUCCESS,
            LOG_PRINT("aclnnBatchNormBackwardGetWorkspaceSize failed. ERROR: %d\n", ret); return ret);
  // Allocate device memory for the workspaceSize returned by the first-stage interface
  void* workspaceAddr = nullptr;
  if (workspaceSize > 0) {
    ret = aclrtMalloc(&workspaceAddr, workspaceSize, ACL_MEM_MALLOC_HUGE_FIRST);
    CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("allocate workspace failed. ERROR: %d\n", ret); return ret);
  }
  // Call the second-stage interface of aclnnBatchNormBackward
  ret = aclnnBatchNormBackward(workspaceAddr, workspaceSize, executor, stream);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("aclnnBatchNormBackward failed. ERROR: %d\n", ret); return ret);

  // 4. (Boilerplate) Wait synchronously for the task to finish
  ret = aclrtSynchronizeStream(stream);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("aclrtSynchronizeStream failed. ERROR: %d\n", ret); return ret);

  // 5. Copy the result from device memory to the host; adapt to the specific API
  auto size = GetShapeSize(inputShape);
  std::vector<float> resultData(size, 0);
  // Copy from the device buffer that backs gradInput, not from the aclTensor handle
  ret = aclrtMemcpy(resultData.data(), resultData.size() * sizeof(resultData[0]), gradInputDeviceAddr,
                    size * sizeof(float), ACL_MEMCPY_DEVICE_TO_HOST);
  CHECK_RET(ret == ACL_SUCCESS, LOG_PRINT("copy result from device to host failed. ERROR: %d\n", ret); return ret);
  for (int64_t i = 0; i < size; i++) {
    LOG_PRINT("result[%ld] is: %f\n", i, resultData[i]);
  }

  // 6. Release the aclTensor and aclBoolArray objects; adapt to the specific API
  aclDestroyTensor(gradOut);
  aclDestroyTensor(input);
  aclDestroyTensor(weight);
  aclDestroyTensor(runningMean);
  aclDestroyTensor(runningVar);
  aclDestroyTensor(saveMean);
  aclDestroyTensor(saveInvstd);
  aclDestroyTensor(gradInput);
  aclDestroyTensor(gradWeight);
  aclDestroyTensor(gradBias);
  aclDestroyBoolArray(outputMask);

  // 7. Free device memory and release runtime resources
  aclrtFree(gradOutDeviceAddr);
  aclrtFree(inputDeviceAddr);
  aclrtFree(weightDeviceAddr);
  aclrtFree(runningMeanDeviceAddr);
  aclrtFree(runningVarDeviceAddr);
  aclrtFree(saveMeanDeviceAddr);
  aclrtFree(saveInvstdDeviceAddr);
  aclrtFree(gradInputDeviceAddr);
  aclrtFree(gradWeightDeviceAddr);
  aclrtFree(gradBiasDeviceAddr);
  if (workspaceSize > 0) {
    aclrtFree(workspaceAddr);
  }
  aclrtDestroyStream(stream);
  aclrtDestroyContext(context);
  aclrtResetDevice(deviceId);
  aclFinalize();
  return 0;
}
```
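To check the printed values on the host, a plain C++ reference of the standard BatchNorm backward can help. This is a sketch under stated assumptions: the operator is assumed to match the usual PyTorch-style definition (training mode uses saveMean/saveInvstd, inference mode uses runningMean/runningVar with eps), `BatchNormBackwardRef` and `BnGrads` are hypothetical names, weight is assumed to be present, and only contiguous float data in N, C, spatial order is handled.

```cpp
#include <cmath>
#include <cstdint>
#include <vector>

struct BnGrads {
    std::vector<float> gradInput, gradWeight, gradBias;
};

// Hypothetical CPU reference for a contiguous N x C x (spatial...) float tensor.
BnGrads BatchNormBackwardRef(const std::vector<float>& gradOut, const std::vector<float>& input,
                             const std::vector<int64_t>& shape, const std::vector<float>& weight,
                             const std::vector<float>& runningMean, const std::vector<float>& runningVar,
                             const std::vector<float>& saveMean, const std::vector<float>& saveInvstd,
                             bool training, double eps) {
    const int64_t n = shape[0], c = shape[1];
    int64_t spatial = 1;
    for (size_t d = 2; d < shape.size(); ++d) spatial *= shape[d];
    const double m = static_cast<double>(n * spatial);  // elements per channel (M)

    BnGrads g{std::vector<float>(gradOut.size()), std::vector<float>(static_cast<size_t>(c)),
              std::vector<float>(static_cast<size_t>(c))};
    for (int64_t ch = 0; ch < c; ++ch) {
        // Statistics for this mode: saved batch stats in training, running stats otherwise.
        const double mean = training ? saveMean[ch] : runningMean[ch];
        const double invstd = training ? saveInvstd[ch] : 1.0 / std::sqrt(runningVar[ch] + eps);

        // Per-channel sums: sum(dy) and sum(dy * xhat).
        double sumDy = 0.0, sumDyXhat = 0.0;
        for (int64_t b = 0; b < n; ++b) {
            for (int64_t s = 0; s < spatial; ++s) {
                const int64_t i = (b * c + ch) * spatial + s;
                sumDy += gradOut[i];
                sumDyXhat += gradOut[i] * (input[i] - mean) * invstd;
            }
        }
        g.gradBias[ch] = static_cast<float>(sumDy);
        g.gradWeight[ch] = static_cast<float>(sumDyXhat);

        const double scale = weight[ch] * invstd;
        for (int64_t b = 0; b < n; ++b) {
            for (int64_t s = 0; s < spatial; ++s) {
                const int64_t i = (b * c + ch) * spatial + s;
                const double xhat = (input[i] - mean) * invstd;
                g.gradInput[i] = static_cast<float>(
                    training ? scale * (gradOut[i] - sumDy / m - xhat * sumDyXhat / m)
                             : scale * gradOut[i]);
            }
        }
    }
    return g;
}
```

With the example's inputs (training = true, weight = 1, saveMean = 0, saveInvstd = 0), this reference gives gradBias = {14, 22, 30} and all-zero gradInput and gradWeight, because a zero inverse standard deviation zeroes out the normalized input. Passing real batch statistics as saveMean and saveInvstd makes the comparison more informative.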
